GenAI Ethics & Governance for Leaders by Freddie Seba.

What’s new (in simple terms):

Hospitals are wiring AI into day-to-day care, pharma is building “AI factories,” and researchers are testing whether models can reason about language—and even notice their own “thinking.” Markets are hot, but concentration risk is a genuine concern. The job for leaders: turn spectacle into stewardship.

This week’s signals (quick reads)

- Hospitals: From pilots to platform. Stanford's ChatEHR lets clinicians ask questions about a patient's chart and get answers inside the electronic health record they already use. The pattern: keep patient data within the hospital, connect through standard interfaces, and evaluate continuously (MedHELM) so the system is measured as it rolls out. Leader takeaway: Copy the platform pattern, not the press release. Ship with monitoring and a kill-switch on day one.

- Time back to clinicians: Stanford Medicine’s Priya Singh describes how AI reduces paperwork and documentation, allowing doctors to spend more time with patients. That “time back” is the ROI that matters. Leader takeaway: Track minutes saved per visit, error rates, and equity impacts across patient groups.

- Pharma's “AI factory” moment: Eli Lilly is teaming with NVIDIA on an AI supercomputer and has launched TuneLab, a platform that gives smaller biotechs access to Lilly's drug-discovery models. The shift is from one-off pilots to production. Leader takeaway: Scale governance with the hardware by implementing access controls, provenance logs, and cluster-level shutdowns.

- Language that reasons about language: Quanta reports that models can now analyze sentence structure, ambiguity, and recursion in ways comparable to trained linguists. This is useful for drafting and review, but the output still needs human checks. Leader takeaway: Use these tools to support legal, policy, and scientific writing, paired with source tracking and human verification.

- “Introspection” research: New studies test whether models can detect and report on their own internal states. The results are early and promising for diagnostics, but limited and unreliable today. Leader takeaway: Treat a model's self-report as a signal, not as ground truth.

- The build is global, not just rich-world: The AI boom is spreading to Indonesia, India, Thailand, and beyond, driven by data-localization rules and “sovereign AI” goals. Leader takeaway: Plan for local data rules, local compute, and community benefits in procurement.

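- Bubble & concentration watch: Capital spending is massive, market leadership is narrow (a handful of companies), and the FT reports a $200bn flood of AI-related credit-market issuance. Market watchers rightly urge caution. Leader takeaway: Separate heat from readiness; ask for audited results, not vibes.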
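The “time back” takeaway above (track minutes saved per visit, error rates, and equity impacts across patient groups) can be turned into a simple recurring report. The sketch below is a minimal illustration that assumes a hypothetical per-visit record with documentation minutes before and after AI assistance plus a clinician-flagged error. The `Visit` fields, the `time_back_report` function, the group labels, and the 80% "lagging" threshold are all illustrative choices, not a standard metric or any hospital's actual method.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Visit:
    # Hypothetical record shape; real fields depend on your EHR and audit data.
    patient_group: str
    doc_minutes_before: float  # documentation minutes without AI assistance
    doc_minutes_after: float  # documentation minutes with AI assistance
    ai_error: bool  # clinician flagged an AI-introduced error in this visit


def time_back_report(visits, gap_threshold=0.8):
    """Per-group minutes saved and error rate, flagging groups that lag.

    A group is flagged as lagging when its mean minutes saved falls below
    gap_threshold times the overall mean (an illustrative equity check).
    """
    if not visits:
        return {}
    by_group = defaultdict(list)
    for v in visits:
        by_group[v.patient_group].append(v)

    overall_saved = mean(v.doc_minutes_before - v.doc_minutes_after for v in visits)
    report = {}
    for group, vs in by_group.items():
        saved = mean(v.doc_minutes_before - v.doc_minutes_after for v in vs)
        report[group] = {
            "visits": len(vs),
            "minutes_saved": round(saved, 1),
            "error_rate": round(sum(v.ai_error for v in vs) / len(vs), 3),
            "lagging": saved < gap_threshold * overall_saved,
        }
    return report


# Usage with made-up sample visits (illustrative only, not real data).
sample = [
    Visit("group_a", 20, 12, False),
    Visit("group_a", 18, 10, False),
    Visit("group_b", 20, 17, True),
    Visit("group_b", 16, 14, False),
]
report = time_back_report(sample)
print(report["group_b"])
```

A "lagging" flag is a prompt for review, not a verdict: small groups can swing these numbers sharply, so pair the report with sample sizes and clinical judgment.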

Leader playbook (the 12 Ps—plain-English edition)

- Purpose — Only deploy AI where it clearly helps people and the mission.

- Problems — Solve real needs; skip shiny demos.

- Profits — Create value without shifting harm onto others.

- People — Protect patients, learners, workers, and communities.

- Planet — Count energy, water, and siting in your total cost.

- Process — Govern the whole lifecycle; use living testbeds (e.g., MedHELM).

- Policy — Design for sector rules and data-localization up front.

- Protections — Safety rails and a kill-switch from day one.

- Privacy — Keep sensitive data inside your organization; route AI calls through a secure gateway.

- Provenance — Log model, prompts, sources, and outputs for audit.

- Preparedness — Drill failure modes (hallucinations, drift, misuse).

- Product Ownership — Name one accountable owner with pause/rollback authority.
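Three of the Ps (Protections, Provenance, and Product Ownership) map directly onto software controls. The sketch below assumes a hypothetical wrapper, `GovernedModel`, around any function that turns a prompt into text: every call is written to a hash-chained audit log (model, prompt, sources, output), and only the named owner can pause or resume the system. The class, its methods, the stand-in model function, and the `"cmio"` owner are illustrative assumptions, not a product API; a production system would use an append-only store and your organization's identity and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone


class GovernedModel:
    """Hypothetical governance wrapper: provenance log, kill-switch, one owner."""

    def __init__(self, model_fn, model_id, owner):
        self.model_fn = model_fn  # any callable: prompt (str) -> output (str)
        self.model_id = model_id
        self.owner = owner  # the single accountable owner
        self.enabled = True
        self.audit_log = []  # in production: an append-only, access-controlled store

    def _require_owner(self, who):
        if who != self.owner:
            raise PermissionError(f"{who} is not the accountable owner")

    def pause(self, who, reason):
        # Kill-switch: block all further calls until the owner resumes.
        self._require_owner(who)
        self.enabled = False
        self._log("pause", {"by": who, "reason": reason})

    def resume(self, who, reason):
        self._require_owner(who)
        self.enabled = True
        self._log("resume", {"by": who, "reason": reason})

    def ask(self, prompt, sources=()):
        if not self.enabled:
            raise RuntimeError(f"{self.model_id} is paused by its owner")
        output = self.model_fn(prompt)
        # Provenance: record model, prompt, sources, and output for audit.
        self._log("inference", {"prompt": prompt, "sources": list(sources), "output": output})
        return output

    def _log(self, event, payload):
        record = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "model_id": self.model_id,
            **payload,
        }
        # Chain each record to the previous record's hash so later edits are detectable.
        prev = self.audit_log[-1]["hash"] if self.audit_log else ""
        body = json.dumps(record, sort_keys=True)
        record["hash"] = hashlib.sha256((prev + body).encode()).hexdigest()
        self.audit_log.append(record)

    def verify_log(self):
        # Recompute every hash in order; any edited record breaks the chain.
        prev = ""
        for rec in self.audit_log:
            body = json.dumps({k: v for k, v in rec.items() if k != "hash"}, sort_keys=True)
            if rec["hash"] != hashlib.sha256((prev + body).encode()).hexdigest():
                return False
            prev = rec["hash"]
        return True


# Usage with a stand-in model function (hypothetical).
model = GovernedModel(lambda p: f"draft answer to: {p}", "demo-model-v1", owner="cmio")
model.ask("Summarize the last visit", sources=["note-123"])
model.pause("cmio", "drift detected in weekly evaluation")
try:
    model.ask("Another question")
except RuntimeError as err:
    print(err)  # demo-model-v1 is paused by its owner
print(model.verify_log())  # True
```

Chaining each record to the previous record's hash means that quietly editing an old entry makes verification fail, which is the property auditors care about. Restricting pause and resume to one named owner mirrors the single point of accountability in the playbook; some organizations may also want frontline staff to be able to pause, with only the owner able to resume.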

Gratitude

With gratitude to the University of San Francisco, Stanford HAI, the Coalition for Health AI (CHAI), AMIA, AAC&U, AAAI, and the USF Schools of Education and Health Professions for their leadership in human-centered AI education.

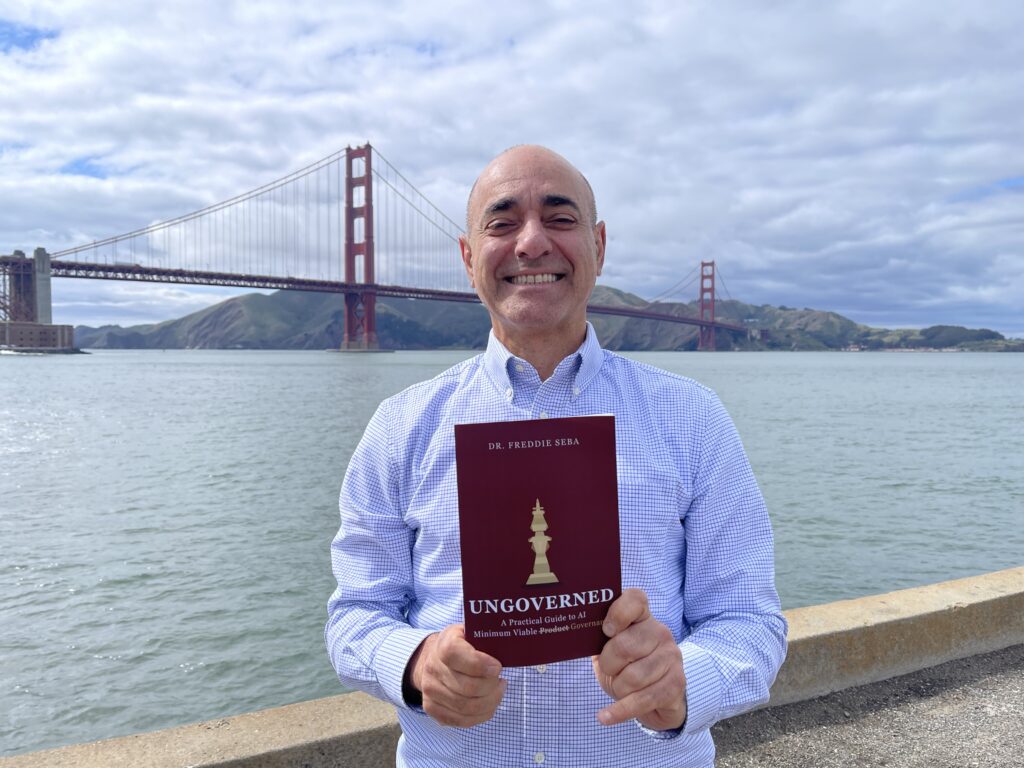

About the author

Freddie Seba is an author, speaker, and EdD candidate at USF focused on GenAI Ethics & Governance for Leaders. He holds an MBA from Yale and an MA from Stanford (IPS), and is a former USF faculty member and Digital Health Informatics Program Director, as well as a Silicon Valley executive and entrepreneur. For speaking and briefings, connect on LinkedIn or visit freddieseba.com.

References & useful reading

- Stanford Medicine — Clinicians can “chat” with medical records through new AI software, ChatEHR. https://med.stanford.edu/news/all-news/2025/06/chatehr.html

- Stanford HAI — How to Build a Safe, Secure Medical AI Platform. https://hai.stanford.edu/news/how-to-build-a-safe-secure-medical-ai-platform

- Stanford CRFM — MedHELM (living medical evaluation benchmark). https://crfm.stanford.edu/helm/medhelm/latest/

- MedHELM paper (arXiv). https://arxiv.org/abs/2505.23802

- The Economic Times — AI helping doctors spend more time with patients, cut down on paperwork: Stanford Medicine’s Priya Singh. https://m.economictimes.com/industry/healthcare/biotech/healthcare/ai-helping-doctors-spend-more-time-with-patients-cut-down-on-paperwork-stanford-medicines-priya-singh/articleshow/125015549.cms

- Reuters — Lilly partners with Nvidia on AI supercomputer to speed up drug development. https://www.reuters.com/business/healthcare-pharmaceuticals/lilly-partners-with-nvidia-ai-supercomputer-speed-up-drug-development-2025-10-28/

- Reuters — Lilly launches AI-powered platform to accelerate drug discovery (TuneLab). https://www.reuters.com/business/healthcare-pharmaceuticals/lilly-launches-ai-powered-platform-accelerate-drug-discovery-2025-09-09/

- PR Newswire — Lilly launches TuneLab platform… https://www.prnewswire.com/news-releases/lilly-launches-tunelab-platform-to-give-biotechnology-companies-access-to-ai-enabled-drug-discovery-models-built-through-over-1-billion-in-research-investment-302550603.html

- NVIDIA Blog — Lilly Deploys World’s Largest, Most Powerful AI Factory… https://blogs.nvidia.com/blog/lilly-ai-factory-nvidia-blackwell-dgx-superpod/

- Quanta Magazine — In a First, AI Models Analyze Language As Well As a Human Expert (Oct 31, 2025). https://www.quantamagazine.org/in-a-first-ai-models-analyze-language-as-well-as-a-human-expert-20251031/

- Transformer Circuits — Emergent Introspective Awareness in Large Language Models. https://transformer-circuits.pub/2025/introspection/index.html

- Anthropic — Emergent introspective awareness in large language models (research note). https://www.anthropic.com/research/introspection

- The Wall Street Journal — It’s Not Just Rich Countries. Tech’s Trillion-Dollar Bet on AI Is Everywhere. https://www.wsj.com/tech/ai/its-not-just-rich-countries-techs-trillion-dollar-bet-on-ai-is-everywhere-1781a117

- Financial Times — Credit market hit with $200bn ‘flood’ of AI-related issuance. https://www.ft.com/content/82f63f23-db20-4f6c-84f1-e7b45ca09f46

- Net Interest (Marc Rubinstein) — Bubble Trouble: AI, Capex and the Anatomy of a Bubble. https://www.netinterest.co/p/bubble-trouble

Transparency & copyright

Drafted and refined with generative tools (ChatGPT, Grammarly). Synthesis, structure, and voice are the author’s.

© 2025 Freddie Seba | All rights reserved | GenAI Ethics & Governance for Leaders