What Hiring Signals, Real Limits of Autonomy, Market Power, and Trust Infrastructure Tell Us About What Comes Next

AI Ethics & Governance for Growth: A Guide for Leaders, Boards & Trustees

By Freddie Seba

© 2026 Freddie Seba. All rights reserved.

Executive Signal

One of the most encouraging AI signals this week did not come from a new model or benchmark.

It came from a hiring insight.

In an interview with Fortune, Daniela Amodei, cofounder of Anthropic, made a simple but powerful point:

As AI accelerates technical tasks, human judgment, interpretation, and context become more—not less—valuable.

Humanities majors and so-called “soft skills” are not being displaced. They are being re-valued.

This reframes the AI debate away from “humans vs. machines” and toward a more durable governance question:

What must remain human—and how do we design systems that protect that responsibility as AI scales?

Programming Update

Starting today, AI Ethics & Governance for Growth moves to a Monday publication schedule.

The new rhythm:

- Monday: Newsletter, framing the week's governance questions

- Mid-week: Podcast, with deeper discussion and practitioner perspective

- Friday (when appropriate): Short clip or reflection, one signal to carry forward

The goal is simple: less noise, more clarity.

What’s Driving This Shift (Plain-Language Signals)

AI Is Becoming Infrastructure—and Infrastructure Shapes Power

Recent reporting shows that advanced AI now requires massive, sustained investment in compute, data centers, energy, and talent. This level of capital intensity concentrates power among a small number of firms and cloud providers.

Policy analysts are increasingly questioning whether existing competition and data laws, such as the EU's Digital Markets Act and Data Act, are sufficient as cloud hyperscalers become unavoidable dependencies.

Why it matters:

Infrastructure decisions quietly determine who has leverage, who bears risk, and who sets the rules.

Autonomy Has Limits—and That’s a Feature, Not a Bug

Despite impressive demos, researchers continue to show that today’s AI systems struggle with basic physical reasoning. This limits true autonomy in robotics, manufacturing, and other safety-critical domains—even as long-term investments continue in humanoid robots and embodied AI.

Why it matters:

Human oversight is not a temporary bridge. It is a durable requirement—and governance should be designed accordingly.

Markets and Policymakers Are Beginning to Push Back

Signals from the U.S. and Europe suggest a shift from permissive experimentation toward guarded preparation:

- U.S. states are considering targeted AI legislation. New York, for example, is weighing two bills to rein in the AI industry.

- European analysts warn that digital sovereignty depends on investment in open-source infrastructure and talent, not regulation alone.

- Oversight groups are publishing increasingly blunt assessments of systemic AI risk.

Why it matters:

AI governance is moving from voluntary principles toward enforceable expectations—unevenly, but unmistakably.

Education and Healthcare Reveal Reality First

Teachers, clinicians, and caregivers encounter AI’s benefits and failures long before courts or regulators do.

In education, the concern is less about cheating and more about how AI shapes language, reasoning, and learning quality.

In healthcare, misuse and over-reliance, not just technical error, are emerging as primary risks. ECRI now ranks misuse of AI chatbots at the top of its annual list of health technology hazards.

Why it matters:

When AI fails in education or healthcare, trust is difficult to restore.

From the Podcast: AI Governance with Dr. Freddie Seba

Episode 5 — Live This Week

The Substrate of Trust — AI + Verification in Plain Language

As AI systems recommend, route, approve, and transact, trust can no longer rely on reputation or “just trust the platform.”

This episode focuses on verification: digital trust rails that make actions and records checkable—who did what, when, and under what rules.

Guest:

Rohan Handa (Mysten Labs)

Previously: BBVA, Horizen Labs Ventures, and EY

Author of The Substrate

Available on Apple Podcasts, YouTube, and Spotify.

Board, Leader, and Public Takeaway

The most important signal this week is not that AI is accelerating.

It is that human judgment is being re-valued. AI will reshape work, education, and institutions, but it does not eliminate responsibility, context, or accountability. Those remain human obligations.

The organizations that succeed will not be the ones that panic or over-automate.

They will be the ones that design systems where human judgment stays central—and verifiable.


Gratitude to the University of San Francisco, AMIA Informatics, Stanford HAI, the Coalition for Health AI, and the American Association of Colleges and Universities.

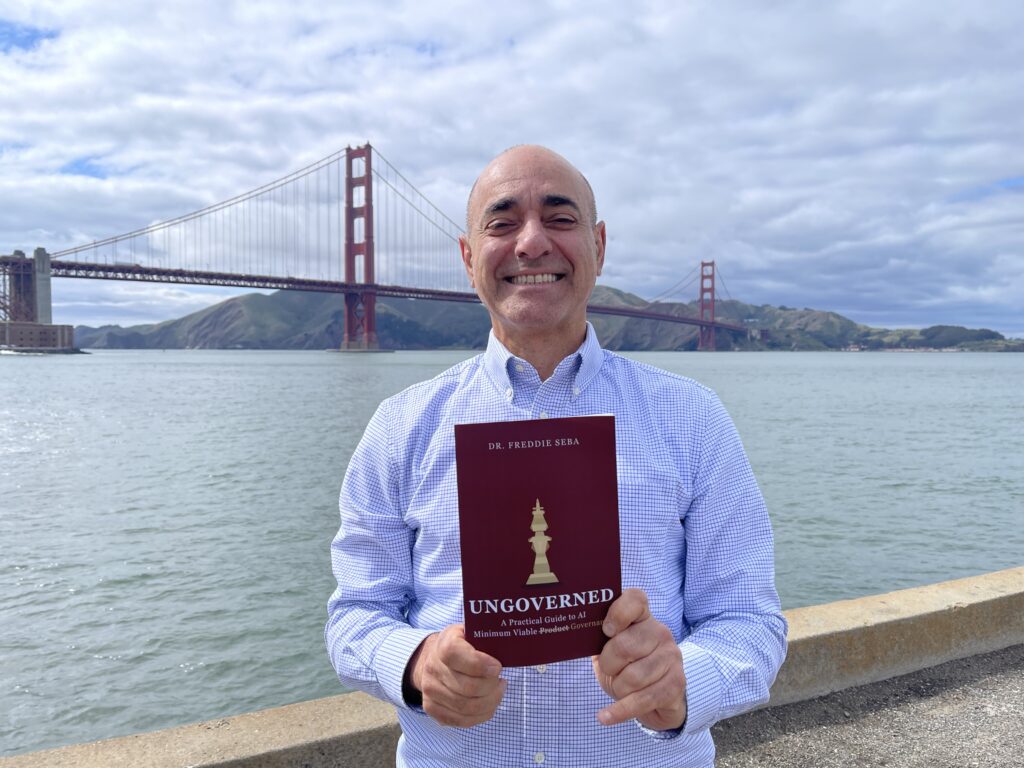

About the Author

Freddie Seba is a researcher and practitioner focused on AI ethics and governance for leaders across higher education, healthcare, and financial services.

He holds an MBA from Yale University, an MA from Stanford University, and an EdD in Organization and Leadership from the University of San Francisco, with a dissertation on AI ethics and governance defended in Fall 2025.

He writes AI Ethics & Governance for Leaders, Boards & Trustees and hosts the companion podcast AI Governance with Dr. Freddie Seba, translating practitioner signals into board-ready oversight: decision rights, risk tiering, vendor accountability, monitoring, and incident preparedness.

Corporate Events + Executive Audiences

I keynote on AI governance, risk, trust infrastructure, and institutional legitimacy.

My keynotes, executive briefings, and board workshops give boards and senior leadership in business and education strategic framing and practical takeaways. Topics include accountability, transparency, safety, responsible adoption in regulated environments, judgment under uncertainty, escalation design, and governance maturity. Workshops follow a practical sequence: inventory → tiering → controls → dashboards → incident drills.

To book a keynote, corporate event talk, executive briefing, or board workshop on AI, connect via freddieseba.com.

And please subscribe to the newsletter and follow the podcast.

Transparency + Disclaimer

Educational content only. This newsletter does not constitute legal, medical, clinical, insurance, financial, or professional advice.

Drafted and refined with AI-assisted tools for synthesis and clarity. Final editorial control and responsibility remain with the author.


References & Further Reading — Issue #56

- Fortune: Anthropic cofounder says humanities majors and soft skills matter more in the AI era. https://fortune.com/2026/02/07/anthropic-cofounder-daniela-amodei-humanities-majors-soft-skills-hiring-ai-stem/

- The Wall Street Journal: AI spending across major tech companies. https://www.wsj.com/tech/ai/ai-spending-tech-companies-compared-02b90046

- The Economist: Stop panicking about AI. Start preparing. https://www.economist.com/leaders/2026/01/29/stop-panicking-about-ai-start-preparing

- The Economist: A social network for AI agents. https://www.economist.com/business/2026/02/02/a-social-network-for-ai-agents-is-full-of-introspection-and-threats

- Stanford HAI: AI can't do physics well. https://hai.stanford.edu/news/ai-cant-do-physics-well-and-thats-a-roadblock-to-autonomy

- NEJM AI: Clinical realities of AI deployment. https://ai.nejm.org/doi/full/10.1056/AIp2501266

- ECRI: Misuse of AI chatbots as a health technology hazard. https://home.ecri.org/blogs/ecri-news/misuse-of-ai-chatbots-tops-annual-list-of-health-technology-hazards

- IMF: The hidden price of data. https://www.imf.org/en/publications/fandd/issues/2025/12/the-hidden-price-of-data-laura-veldkamp

- The Verge: New York considers bills to rein in AI. https://www.theverge.com/ai-artificial-intelligence/875501/new-york-is-considering-two-bills-to-rein-in-the-ai-industry

- Tech Policy Press: DMA, Data Act, and cloud hyperscalers. https://techpolicy.press/can-europes-digital-markets-act-and-data-act-rein-in-cloud-hyperscalers

- Tech Policy Press: EU digital sovereignty and open source. https://techpolicy.press/eus-digital-sovereignty-depends-on-investment-in-opensource-and-talent

- Tech Oversight Project: Top AI oversight report (Jan 2026). https://techoversight.org/2026/01/25/top-report-mdl-jan-25/

- Center for AI and Digital Policy. https://www.caidp.org/

#AIGovernance #ResponsibleAI #BoardOversight #Trustees #ExecutiveLeadership #HumanJudgment #AIandTrust #Verification #DataGovernance #RiskManagement #AIinEducation #HealthcareAI #TechPolicy