This week’s most important AI signal didn’t come from a benchmark.

It came from a doctrine lesson.

Retired Gen. David Petraeus put it plainly:

“Lessons are not learned when they are identified… Rather, they are only learned when you develop new concepts, write new doctrine, change organizational structures, overhaul your training, refine leader development courses, set out new materiel requirements that drive the procurement process, and even make changes to your personnel policies, recruiting, and facilities.”

That applies directly to AI governance.

Innovation is accelerating.

But governance only matures when institutions change.

So the leadership question becomes:

Are we identifying AI risks — or redesigning our institutions to manage them?

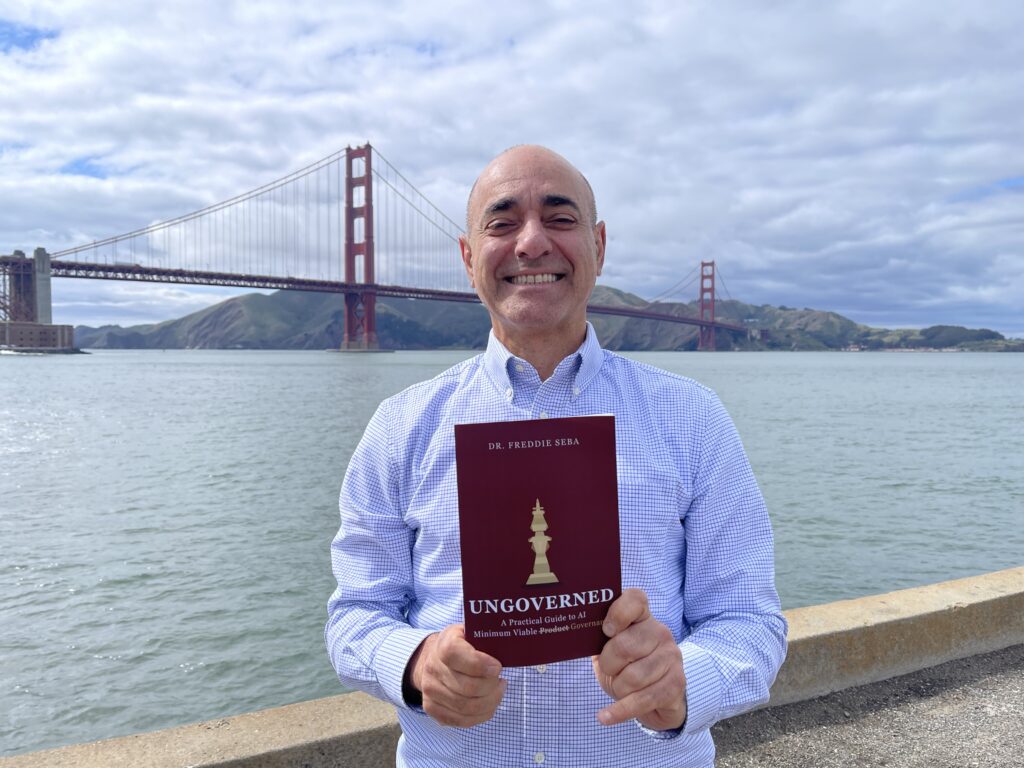

Since starting this doctoral journey in Fall 2022 — right as ChatGPT/GenAI exploded — I made myself a promise:

Produce meaningful, practice-ready knowledge on AI ethics and governance, and disseminate it beyond academia so that organizations and people can benefit.

That is what this newsletter (and the podcast) is for.

I’m also deep in several academic publishing projects, exploring new opportunities across academia, industry, and the speaking circuit — and working toward a book launch soon to translate this work into a Practical Guide to AI Oversight for Boards, Trustees, and Executive Leadership.

The Seba Framework — The 12 Ps of Responsible AI Oversight ©

(How I discern signal from noise)

Purpose — Mission alignment vs. cost extraction

Problems — Decision-relevant framing (not metric chasing)

Profits — Who benefits vs. who bears risk

People — Students, patients, workers; lived impacts

Planet — Energy, compute, and scale costs

Process — Lifecycle monitoring and incident learning

Policy — Risk-specific rules (health, youth, education, employment)

Protections — Vulnerable populations and escalation paths

Privacy — Enforceable limits on data use and training

Provenance — Traceability of data, models, vendors

Preparedness — Board competence and governance cadence

Product Ownership — Institutions own outcomes once AI acts

This week’s core signal:

Acceleration without institutional redesign creates intensity without integrity.

1) Innovation Is Scaling — Structure Is Lagging

Recognition and momentum continue:

- Forbes released the Forbes 250: America’s Greatest Innovators (2026)

- NVIDIA is positioning financial services as a frontline for enterprise AI adoption, as its GTC financial services session lineup signals

- Deep research continues to compound (e.g., Google DeepMind’s Gemini “Deep Think” framing for scientific discovery)

Meanwhile, the UK Government published the AI Opportunities Action Plan: One Year On.

Strategy documents are multiplying.

Structural redesign is slower.

Boards should ask:

- Has AI oversight moved from innovation teams to enterprise risk?

- Have we updated procurement doctrine — not just “guidelines”?

- Is vendor concentration mapped and stress-tested?

- Are AI “platform bets” treated as financial concentration risk?

Acceleration without redesign creates fragility.

2) Work: AI Doesn’t Reduce Work — It Intensifies It

A critical new signal from Harvard Business Review:

AI often doesn’t “remove work.”

It intensifies it — expanding scope, speeding expectations, increasing coordination overhead, and blurring boundaries.

This is a governance issue, not a productivity anecdote.

Under the Seba Framework:

People — burnout, role confusion, and hidden labor

Process — workflow redesign + monitoring, not tool adoption

Preparedness — leaders must govern pace, not just pilots

3) Education: Harness or Prohibit? (Now with Agentic AI)

A recent New York Times opinion piece captures the institutional split:

Some educators harness AI to boost engagement and creative exploration.

Others prohibit it entirely — leaving students to figure out ethical use on their own.

Then a second education signal raises the stakes:

Agentic AI can navigate portals, mimic user actions, and complete tasks autonomously — turning “academic integrity” into a security + identity governance problem.

Under the Seba Framework:

Purpose — is AI serving learning?

Policy — enforceable + realistic rules

Protections — vulnerable learners and institutional trust

Privacy — student data redlines

Preparedness — faculty + trustees must be agentic-AI literate

4) Healthcare: Legitimacy Is the Benchmark

Healthcare remains the sharpest test of governance because the harms are real, personal, and often invisible.

Two distinct signals are converging:

A) Consumer health: multiple reports warn that health advice from AI chatbots is frequently wrong in real-world use.

B) Payer health: Stanford HAI’s policy brief argues that weak governance in health insurance utilization review could amplify wrongful denials and erode accountability.

I’m grateful to engage regularly with leaders across:

Coalition for Health AI

University of Illinois Chicago Applied Health

American Association of Colleges and Universities

Under the Seba Framework:

Process — monitoring + incident learning

Policy — risk-specific rules for health decisions

Protections — escalation paths that work

Product Ownership — institutions own outcomes once AI acts

5) Sovereign AI + Public Sector Adoption (The “New Governance Geography”)

Stanford HAI argues we may be entering a new economic order shaped by “sovereign AI” and alliances among mid-sized nations.

And in Europe, GENAI4LEX-B, an AI-powered legislative support project at the Italian Chamber of Deputies, signals a parallel trend:

Public institutions are experimenting with GenAI to support legislative work, with governance implications for transparency, traceability, and democratic legitimacy.

Under the Seba Framework:

Provenance — traceability for data/tools in public reasoning

Policy — rules for decision-adjacent AI

Preparedness — governance capacity is a national infrastructure

6) Trust, Verification, and the Hoax Problem

A Platformer investigation debunked a viral “Uber Eats whistleblower” story that fooled large audiences.

This is not just “media drama.”

It is a governance warning:

In an AI environment, verification becomes infrastructure.

And institutions that cannot quickly validate evidence will be governed by narratives rather than facts.

Under the Seba Framework:

Provenance — evidence authenticity + chain-of-custody

Process — escalation and verification protocols

Preparedness — operational readiness for information shocks

7) Market Signals: Tools, Talent, Hardware

Several market signals reinforce the same theme: acceleration is easy; durable operations are hard.

- Dev tooling: a record $60M seed round for a dev tool startup from a former GitHub CEO signals that “managing AI output” is becoming its own category.

- Hardware: reports suggest OpenAI’s Jony Ive-designed device has slipped to 2027, and legal realities are constraining its branding.

- Talent: Business Insider reports continued cofounder departures at xAI — a reminder that execution and governance strain even “frontier” teams.

Boards should treat these as governance signals:

The bottleneck is not intelligence — it’s reliability, accountability, and institutional redesign.

Podcast Signal (mid-week)

AI Governance with Dr. Freddie Seba

Practical Guide to AI Oversight for Boards, Trustees, and Executive Leadership.

Managing AI Governance and Ethics in a Growth Environment.

Real. Bold. Impactful — in Plain Language.

Available on Spotify, YouTube, Apple Podcasts, and Substack.

This week’s conversation:

Making AI the Reliability Layer — Optimizing for Model Speed, Efficiency, and Trustworthiness.

Guest: Victor Chapela, CEO and Cofounder of a Silicon Valley startup soon to emerge from stealth

Boards and executives — come ready.

Programming Reminder

Monday: Newsletter — framing the week’s governance questions

Mid-week: Podcast — practitioner perspective

When appropriate: Friday short clip or reflection

Gratitude

University of San Francisco

AMIA Informatics

Coalition for Health AI

University of Illinois Chicago Applied Health

American Association of Colleges and Universities

Tech Policy Press community

About the Author

Freddie Seba is a researcher and practitioner focused on AI ethics and governance for leaders across higher education, healthcare, and financial services.

He holds an MBA (Yale University), an MA (Stanford University), and an EdD in Organization and Leadership (University of San Francisco), with a dissertation on AI ethics and governance defended in Fall 2025.

He writes AI Ethics & Governance for Leaders, Boards & Trustees and hosts the companion podcast AI Governance with Dr. Freddie Seba, translating practitioner signals into board-ready oversight: decision rights, risk tiering, vendor accountability, monitoring, and incident preparedness.

Corporate Events + Executive Audiences

I keynote on AI governance, risk, trust infrastructure, and institutional legitimacy.

My talks give boards and senior leaders strategic framing and practical takeaways on accountability, transparency, safety, responsible adoption in regulated environments, judgment under uncertainty, escalation design, and governance maturity. Formats include business and educational engagements, executive briefings, and board workshops that follow a clear path: inventory → tiering → controls → dashboards → incident drills.

To book a keynote, corporate event talk, executive briefing, or board workshop on AI, connect via freddieseba.com.

And please subscribe to the newsletter and follow the podcast.

Transparency + Disclaimer

Educational content only. This newsletter does not constitute legal, medical, clinical, insurance, financial, or professional advice.

Drafted and refined with AI-assisted tools for synthesis and clarity. Final editorial control and responsibility remain with the author.

© 2026 Freddie Seba. All rights reserved.

#AIGovernance #ResponsibleAI #BoardOversight #ExecutiveLeadership #AILeadership

#AIandTrust #HealthcareAI #AIinEducation #SovereignAI #RiskManagement

#DigitalTrust #EnergyAndAI #SiliconAlley #TechPolicy

References + Links

1) UK Government — AI Opportunities Action Plan: One Year On

https://www.gov.uk/government/publications/ai-opportunities-action-plan-one-year-on/ai-opportunities-action-plan-one-year-on

2) WSJ Opinion — NATO Has Seen the Future and Is Unprepared

https://www.wsj.com/opinion/nato-has-seen-the-future-and-is-unprepared-887eaf0f

3) Forbes — Forbes 250: America’s Greatest Innovators (Feb 11, 2026)

https://www.forbes.com/sites/alexknapp/2026/02/11/forbes-250-americas-greatest-innovators/

4) HBR — AI Doesn’t Reduce Work—It Intensifies It (Feb 9, 2026)

https://hbr.org/2026/02/ai-doesnt-reduce-work-it-intensifies-it

5) Stanford HAI — A New Economic World Order May Be Based on Sovereign AI… (Feb 6, 2026)

https://hai.stanford.edu/news/a-new-economic-world-order-may-be-based-on-sovereign-ai-and-midsized-nation-alliances

6) NVIDIA GTC — Financial Services Sessions (GTC 2026 signal)

https://www.nvidia.com/gtc/sessions/financial-services/?ncid=so-nvsh-775150-vt09&es_id=833385362c

7) NYT — AI Companies Are Eating Higher Education (Feb 12, 2026)

https://www.nytimes.com/2026/02/12/opinion/ai-companies-college-students.html?unlocked_article_code=1.LlA.VFML.8ZeafQPfbW22&smid=nytcore-ios-share

8) CVC — Safeguarding Academic Integrity in the Age of Agentic AI (PDF)

https://www.cvc.edu/wp-content/uploads/2025/11/Safeguarding-Academic-Integrity-in-the-Age-of-Agentic-AI.pdf

9) Stanford HAI Policy — Toward Responsible AI in Health Insurance Decision-Making (Feb 10, 2026)

https://hai.stanford.edu/policy/toward-responsible-ai-in-health-insurance-decision-making

10) NYT — A.I. Is Making Doctors Answer a Question: What Are They Really Good For? (Feb 9, 2026)

https://www.nytimes.com/2026/02/09/health/ai-chatbots-doctors-medicine.html

11) NYT Well — Health Advice From A.I. Chatbots… (Feb 9, 2026)

https://www.nytimes.com/2026/02/09/well/chatgpt-health-advice.html

12) Platformer — Fake Uber Eats “Whistleblower” Hoax Debunked (Jan 2026)

https://www.platformer.news/fake-uber-eats-whisleblower-hoax-debunked/

13) TechCrunch — Former GitHub CEO raises record $60M dev tool seed round at $300M valuation (Feb 10, 2026)

https://techcrunch.com/2026/02/10/former-github-ceo-raises-record-60m-dev-tool-seed-round-at-300m-valuation/

14) JAMIA — What patients want from healthcare chatbots (Oct 2025)

https://academic.oup.com/jamia/article/32/11/1735/8275999

15) DeepMind — Accelerating mathematical and scientific discovery with Gemini Deep Think (Feb 2026)

https://deepmind.google/blog/accelerating-mathematical-and-scientific-discovery-with-gemini-deep-think/

16) Business Insider — Elon Musk’s xAI loses second cofounder in 48 hours (Feb 2026)

https://www.businessinsider.com/elon-musk-xai-loses-second-cofounder-jimmy-ba-2026-2

17) MacRumors — OpenAI’s Jony Ive-designed device delayed to 2027 (Feb 10, 2026)

https://www.macrumors.com/2026/02/10/openais-jony-ive-designed-device-delayed-to-2027/

18) EU Interoperable Europe — GENAI4LEX-B (AI-powered legislative support, Italian Chamber of Deputies)

https://interoperable-europe.ec.europa.eu/collection/public-sector-tech-watch/genai4lex-b-ai-powered-legislative-support-italian-chamber-deputies

Newsletter + Podcast hub:

https://freddieseba.com
